© 2024 Tales from Outside the Classroom ● All Rights Reserved

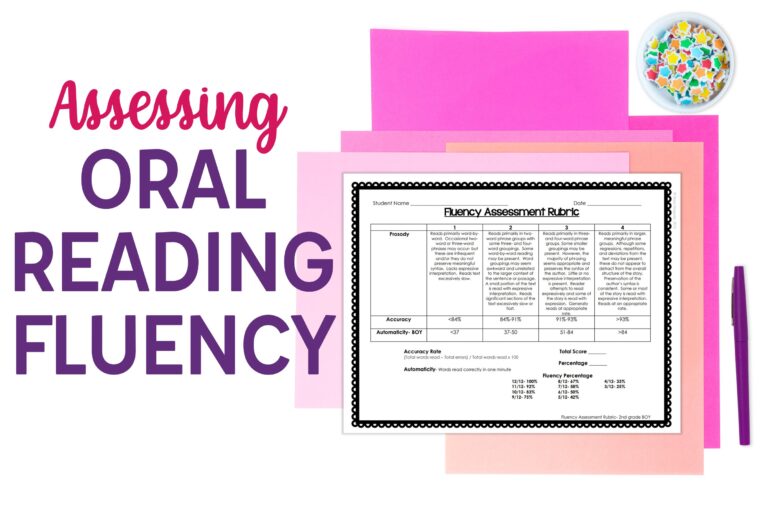

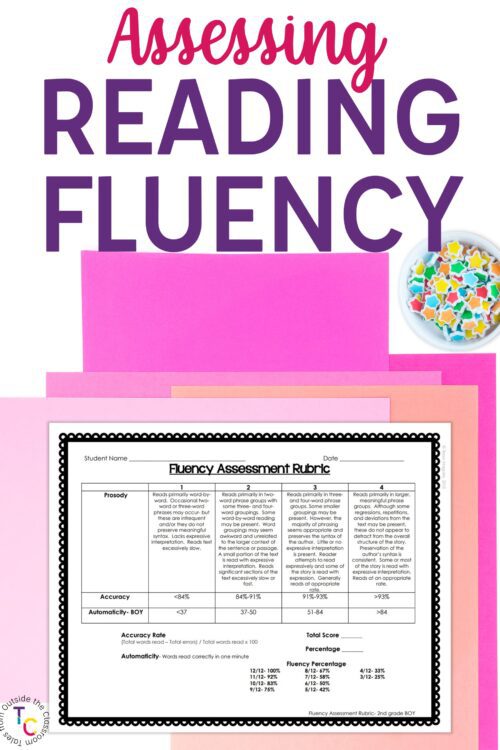

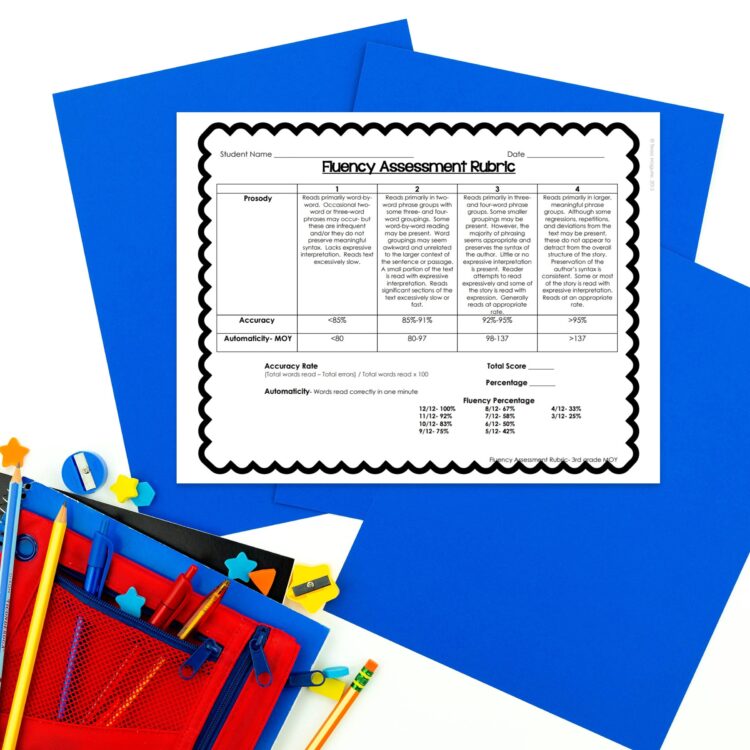

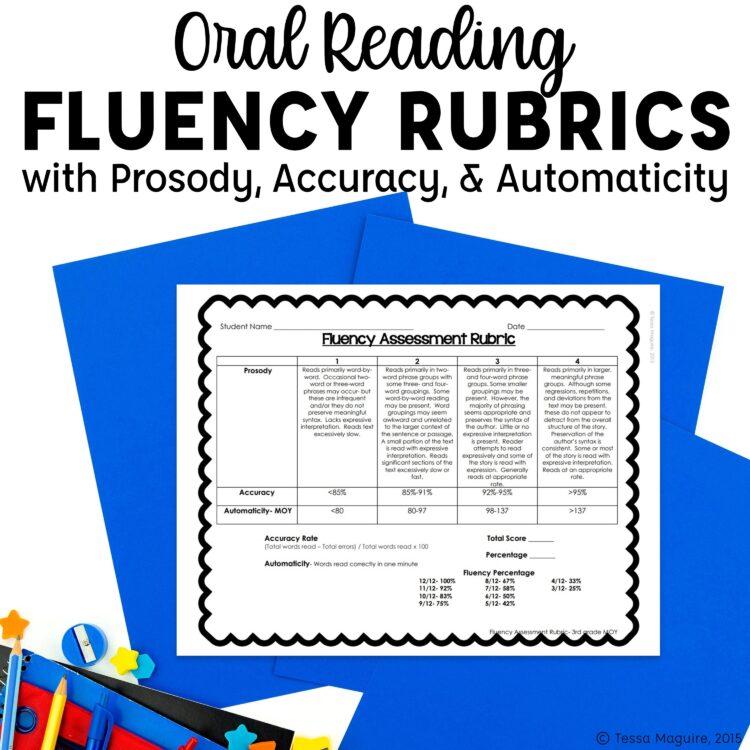

Oral Reading Fluency Rubrics for Assessments & Grading

Many, many years ago, I was a reading teacher in a K-5 building. We revamped our report cards that year to be very standards focused. Indicators based on the standards were broken apart on the report card, and each received its own score, which then made up the overall grade. One of the teachers came to me and asked, “How do you grade fluency?” We talked things through and couldn’t come to a solid opinion on the best way. My oral reading fluency rubrics were born out of that question.

What are the components of reading fluency?

There are 3 well-researched components to reading fluency: accuracy, automaticity, and prosody. Each component encompasses a different area of reading.

Accuracy: How accurate was the decoding or word recall, including self-corrections?

Automaticity: How many words were read correctly in the timed minute?

Prosody: How does the student sound? Is there phrasing and expression?

Each of these components is a factor in a student’s overall reading fluency. Obviously, accuracy and automaticity are the most important from a decoding standpoint. But, comprehension can also be directly tied to prosody as meaning is often tied to phrasing and intonations.

So, if we’re being asked to give a grade for fluency (not saying we should, just that it’s the ask of many teachers), how do we do that?

How do you measure reading fluency?

To my knowledge, there had never been a single way to measure reading fluency that included all three components, and creating one became my mission. I found resources that measured each skill in isolation and I researched things to the best of my abilities as a reading interventionist (not a researcher).

One of the first things I found was the NAEP Oral Reading Fluency scale. I was excited to find something that gave tangible characteristics for how fluent readers should sound: their prosody. But, of course, I didn’t think that should be the only thing students were rated on.

At the time, all of the K-2 teachers were using DIBELS. However, this was the original DIBELS (before the accuracy rate was included). The teacher didn’t want to give the students’ grades just based on accuracy. She contemplated giving a percentage based on the students’ automaticity in relation to the norm. However, as she described, that score gives no credit to students who read beyond the target, because the target exists only to determine who needs intervention. So, basically, if that was used, students would have been considered fluent readers as long as they weren’t in need of intervention. And while that’s not a crazy thought, it in no way incorporates how students sound, or whether they read really, really quickly and made a ton of mistakes. I didn’t have a good answer, so I took to the internet. And found nothing.

I took my knowledge of accuracy rates, the DIBELS automaticity benchmarks, and the prosody scale from NAEP and turned it into a 12-point oral reading fluency rubric. I then emailed the fluency guru, Dr. Tim Rasinski, to see if I could get his input or if he had any recommendations. I fully expected no response. I mean, who am I? And he’s an innovator.

Guess what? He responded. Not only did he respond but he said that they were “really, really good”. I died. I printed out that email to have forever and then I died. All these years later, I have since misplaced that printed email amongst my many moves, and I’m a little bit sad about that!

Fast forward many years and several things have changed. First, DIBELS now gives benchmarks for accuracy rates for each grade level, and those have been adjusted over the years. Automaticity rates have also changed, with expectations for words read correctly increasing quite a bit from the early years of Oral Reading Fluency (or ORF) assessments.

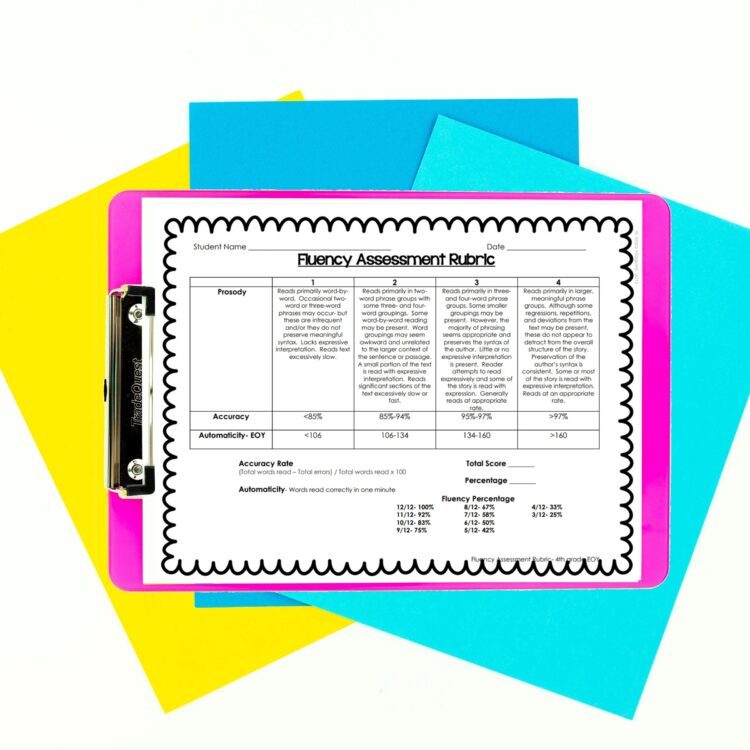

I’ve updated the reading fluency rubrics a few times over the years. Most recently, the updates reflect the 2017 Hasbrouck & Tindal Oral Reading Fluency Norms. Several years back I updated the accuracy scores to reflect DIBELS expectations. Those expectations have since changed, but other than a few tweaks in 2nd and 3rd grades, I left the rubrics as-is. DIBELS treats 95% accuracy as proficient, and it should be. But it doesn’t differentiate students working above average. My rubrics include expectations of 96%, 97%, and 98% accuracy with older students.

There are different reading fluency rubrics for the beginning, middle, and the end of the year for grades 2-6. This coincides with typical diagnostic windows. Between those benchmarks, the rubrics can also be used for progress monitoring or formative assessment purposes using the rubric from the previous benchmark period.

How do you use the rubrics?

Fluency assessments are typically done on one-minute cold reads, meaning texts the students have not previously read. Many traditional basal curriculums include cold read texts, or other texts that could easily be used. A previous basal text I had access to provided new texts for the weekly reading assessment. These were texts I sometimes used for the cold read.

To administer, I ensure there is a blank master copy for students to read from. Then I have one copy of the text per student that I use as my recording form. I tell students the title of the text and ask them to read it aloud for me. I record student miscues, and indicate the stopping place when the timer is up. If you’re concerned that students would be distracted with the timer, you can also just indicate their stopping point while they continue reading. Once the timing is up, I calculate the number of words read correctly and the accuracy rate (number of words read correctly divided by number of words read in all). Then, I record them on the reading fluency rubric and total up the score.
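If you would rather let a short script do the arithmetic, the calculation described above could be sketched roughly like this. It is only an illustration: the names `ColdReadResult` and `rubric_total` are made up for this example, it assumes self-corrected words are not marked as miscues, and it assumes each of the three components is scored from 1 to 4 (which would add up to the 12-point total). The actual cut scores come from the rubric for the grade and benchmark period and are not reproduced here.

```python
from dataclasses import dataclass


@dataclass
class ColdReadResult:
    """One student's one-minute cold read (hypothetical helper for illustration)."""
    words_attempted: int  # every word read before the stopping point
    miscues: int          # errors marked on the recording copy (assumes self-corrections are not counted)

    @property
    def words_correct(self) -> int:
        # Words read correctly in the timed minute
        return self.words_attempted - self.miscues

    @property
    def accuracy_rate(self) -> float:
        # Words read correctly divided by words read in all
        if self.words_attempted == 0:
            return 0.0
        return self.words_correct / self.words_attempted


def rubric_total(accuracy_score: int, automaticity_score: int, prosody_score: int) -> int:
    """Add up the three component scores (assumed here to be 1-4 each, 12 points possible)."""
    scores = (accuracy_score, automaticity_score, prosody_score)
    for score in scores:
        if not 1 <= score <= 4:
            raise ValueError("each component is assumed to be scored from 1 to 4")
    return sum(scores)


result = ColdReadResult(words_attempted=100, miscues=5)
print(result.words_correct)            # 95
print(f"{result.accuracy_rate:.0%}")   # 95%
print(rubric_total(3, 2, 4))           # 9
```

The component scores passed to `rubric_total` still have to be looked up by hand on the rubric; the sketch only handles the counting and the final sum.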

With one strand of the Common Core standards tied to fluency (RF.4) for each grade level, it’s important to me to have a tool that accurately depicts students’ fluency so that I can report the information accurately to parents.

Click here to head to TpT to download the oral reading fluency rubrics for use for your students or your school for free.

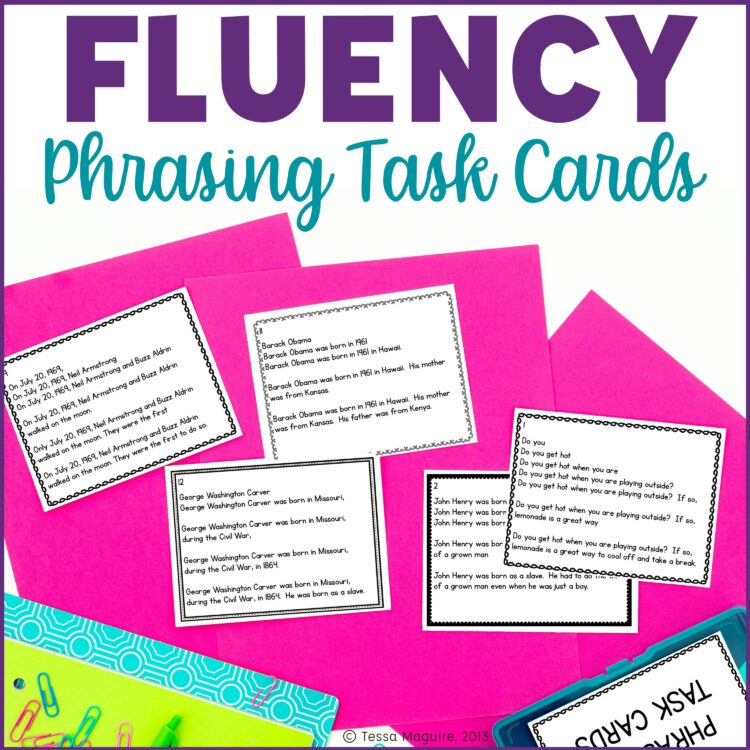

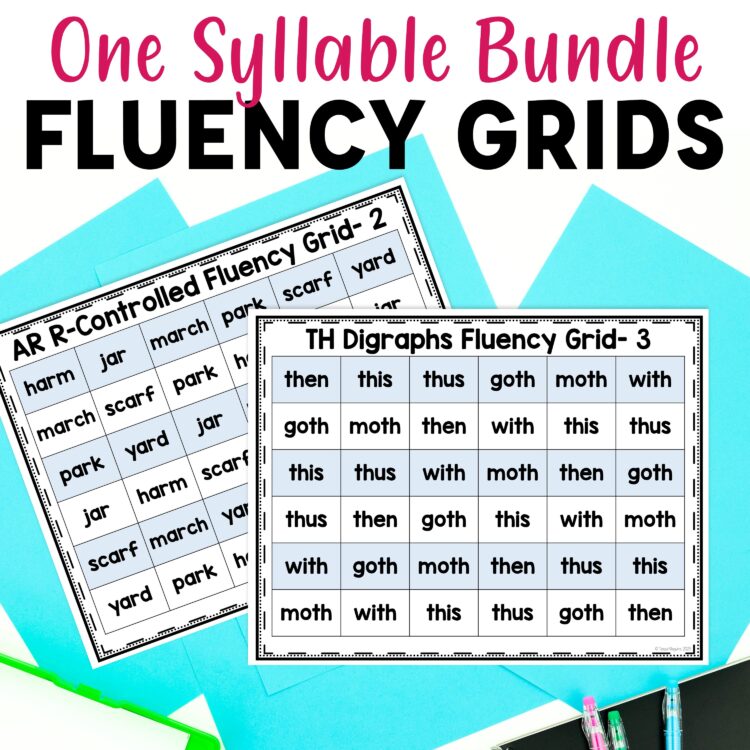

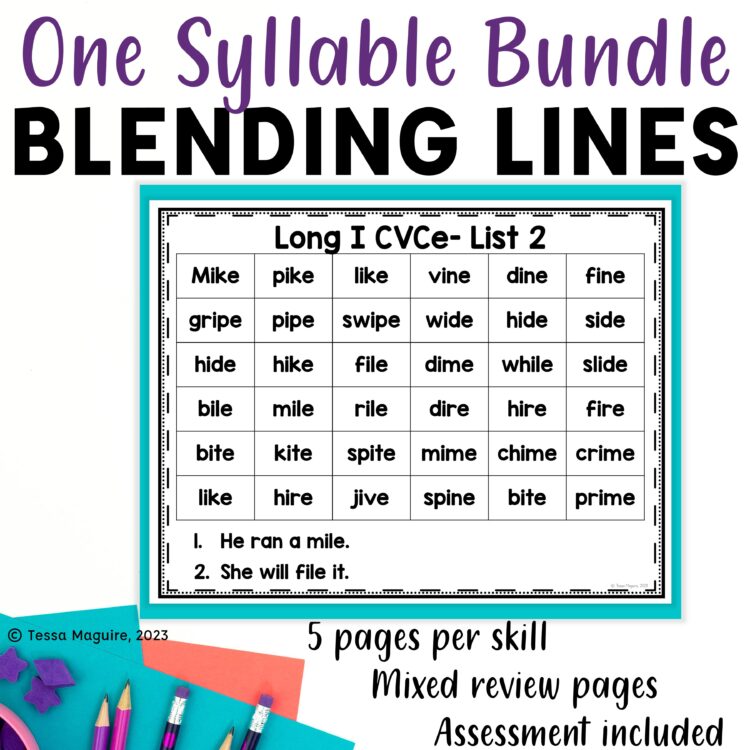

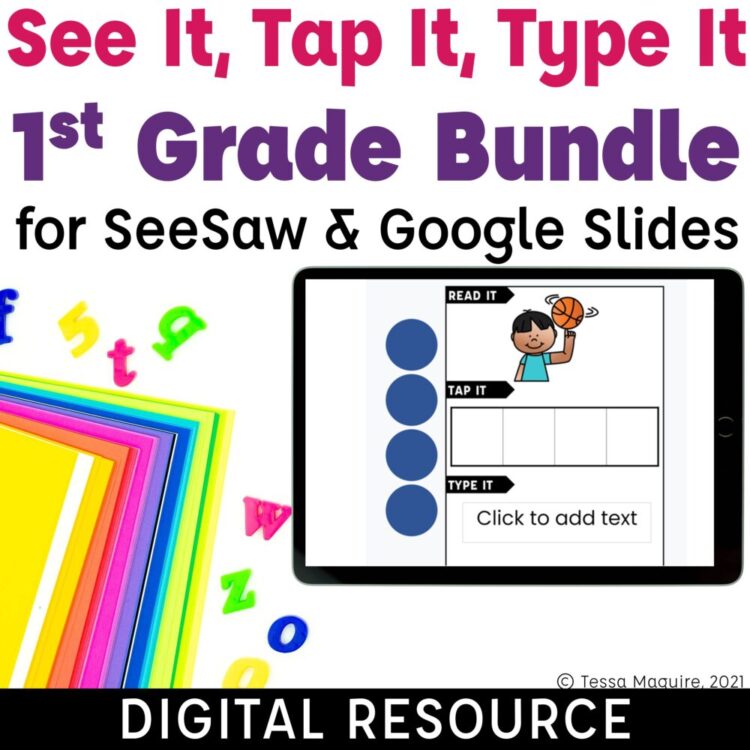


Want to read more about fluency? You may enjoy reading A Close Look at Fluency where I start to unpack Dr. Rasinski’s A Fluent Reader.


Hi! I’m Tessa!

I’ve spent the last 15 years teaching in 1st, 2nd, and 3rd grades, and working beside elementary classrooms as an instructional coach and resource support. I’m passionate about math, literacy, and finding ways to make teachers’ days easier. I share from my experiences both in and out of the elementary classroom. Read more About Me.

20 Comments

I really like these rubrics! We use the Dibels benchmark assessment for our report cards and I progress monitor frequently. I created a Fluency Recording Sheet to use in my Fluency Center along with a grade-level passage. I pair kiddos by ability and they take turns being the teacher. While they are the "teacher" they time the student for one minute and mark the student's copy of the passage and then help them fill out the recording sheet. They love it and it has quickly become one of their favorite centers. I love looking over and seeing the kids pretend to be me. They take it so seriously and they really try to help each other improve! I also will use this sheet in other ways such as daily partner reading or even in our reading groups to monitor progress throughout the week! (:

https://www.teacherspayteachers.com/Product/Fluency-Recording-Sheet-1668680

These are great!! I am a second year teacher and have struggled from day one, to assign a grade to fluency!

I hope these help!

These are great! Have you by chance made these to match the 2017 Hasbrouck and Tindal’s data? I would LOVE them or at least these files so I can edit them. Can you help me? carrn@columbiak12.com

I haven’t. Let me do some research and I’ll get back to you.

Great! Thank you!

Hello Tessa! Did you happen to look at the 2017 fluency data charts to update these? My county is back at it with grading and has created a grading scale that DOESN’T even consider the ORF charts. I am so embarrassed they would roll something out without proper research, so I’m going to fight for the students to use something like your rubrics that will give a more accurate picture. No lie, a student that can read 120 words would get an 85/B with no account for prosody. 120 perfect words, perfect accuracy, perfect prosody would be an 85/B…. wow! So, if you have updated them to the 2017 ORF norms I would appreciate them, or if you send me the editable file, I will do it and share back to you. Thanks so much for sharing this. I also reached out to Dr. Tim Rasinski. He agrees.

I have not yet. It was on my summer list and I haven’t gotten to it. I will try my very best to get to it this week and get back with you!

Hi Nicole, I have updated these with the 2017 percentiles. In the read first file, I explained how I correlated the percentiles and the 1-4 scoring. You can download them here: https://www.teacherspayteachers.com/Product/Oral-Reading-Fluency-Rubrics-for-Grades-2-6-1669110#show-price-update

You are amazing! Thank you!

Can I ask why you decided to add the percentiles into the mix? From all my research I’m seeing that we should be comparing to the 50%ile norms. So if a 3rd grader at BOY gets 83 words correct with prosody and accuracy, in grades that would be an equivalent to a perfect grade (if your county makes you grade). Anyone reading 10 WCPM under that norm would need some intervention. Your excellent readers way above and beyond wouldn’t need biweekly progress monitoring and would essentially just earn their A when grading came around. So, I can tell you’re a researcher, so in all your searching did you find something that says we should expect students to be above the norm? Sorry to be so inquisitive, but grading fluency with no guidelines at my school is getting quite ridiculous. I want to do right by these kids.

For starters, I am absolutely not a researcher. I’m a teacher that tried to find a solution when the district asked us to grade fluency. I looked around as best I could, and read what I could, but definitely am not a researcher. I also didn’t change the rubric itself. All I changed was the words per minute expectations to use the newer rubric as you rightfully suggested. My mindset with the 50th percentile was that’s the average expectation for not needing intervention. I put the 50 mark at the 2, but I went back and forth thinking perhaps it should be a 3. If from what you’re seeing, you think that’s worthwhile, I am happy to make that change since I wasn’t confident with those placements. Are you suggesting it should be a 4 instead?

I originally made these many years ago. At the time, I took DIBELS scores and used them as my guide. If you scored green on DIBELS, I made that worth 10 additional wpm a 3 with the assumption that their scores reflect those students in need of intervention. Scoring just above that didn’t necessarily mean you were a strong reader, just that you weren’t struggling.

I am not 100% either because I’m a teacher also. My county seems lost and has made this ridiculous grading scale they expect us to use to grade students. I am under the impression from reading how the norms were created that the 50th percentile is “At the expected benchmark” which in my teacher mind is what we want. Then we increase the expected benchmark every “season”. I have reached out to our fluency guru as well. Last school year, we talked a few times and he agreed the 50th percentile is what we want. He totally disagreed with grading it, but when our counties force us, we have no choice.

I was just curious if you found info I haven’t run across. I’m trying to advocate for my students in the county, because not many people know much about fluency in the upper grades. If I’m going to grade my students, I want it to be accurate so I’m trying to get info anywhere I can. Can you believe us two teachers reached out to a man who has spent his life dedicated to fluency, but not one other person in my county had before rolling out a grading system? Urgh!

This is the exact scenario these were born from. I was a little baby teacher in a literacy interventionist role and the district said that there should be a grade for the fluency standard but gave zero guidance. The teacher questioned what was best to use and after not finding anything that included all of the areas of fluency, these were born. I, of course, want things to be right though. I will continue to do some of my own research as well to see if I can find some more clarity. Your point makes sense, but I also was going with the mindset that the 50th percentile indicated a student wasn’t in need of intervention which is just “okay” in my mind. But I had previously used the DIBELS ORF scores.

I originally created these in Word. I’m happy to send you the original file if you want to update the accuracy scores. Are you interested in that?

And yeah, one of my biggest frustrations as a teacher is top-down directives that don’t make sense on the bottom, with no clarity or willingness to figure out the issue.

Hello,

These are great! Do they align with Dibels8? I am looking for fourth grade accuracy.

I’m not sure if they align to Dibels 8 as I do not have that system for comparison.